What Are AI Hallucinations? Causes, Risks, and How Businesses Can Avoid Them

Introduction

Artificial intelligence is transforming industries, but it is far from perfect. One of the biggest challenges businesses face today is AI hallucination: an AI system generating incorrect, misleading, or completely fabricated information. As more organizations adopt AI, often with the help of AI software development companies, understanding this risk becomes critical. In this blog, we explore what AI hallucinations are, why they happen, the risks they pose, and how businesses can avoid them.

What Are AI Hallucinations?

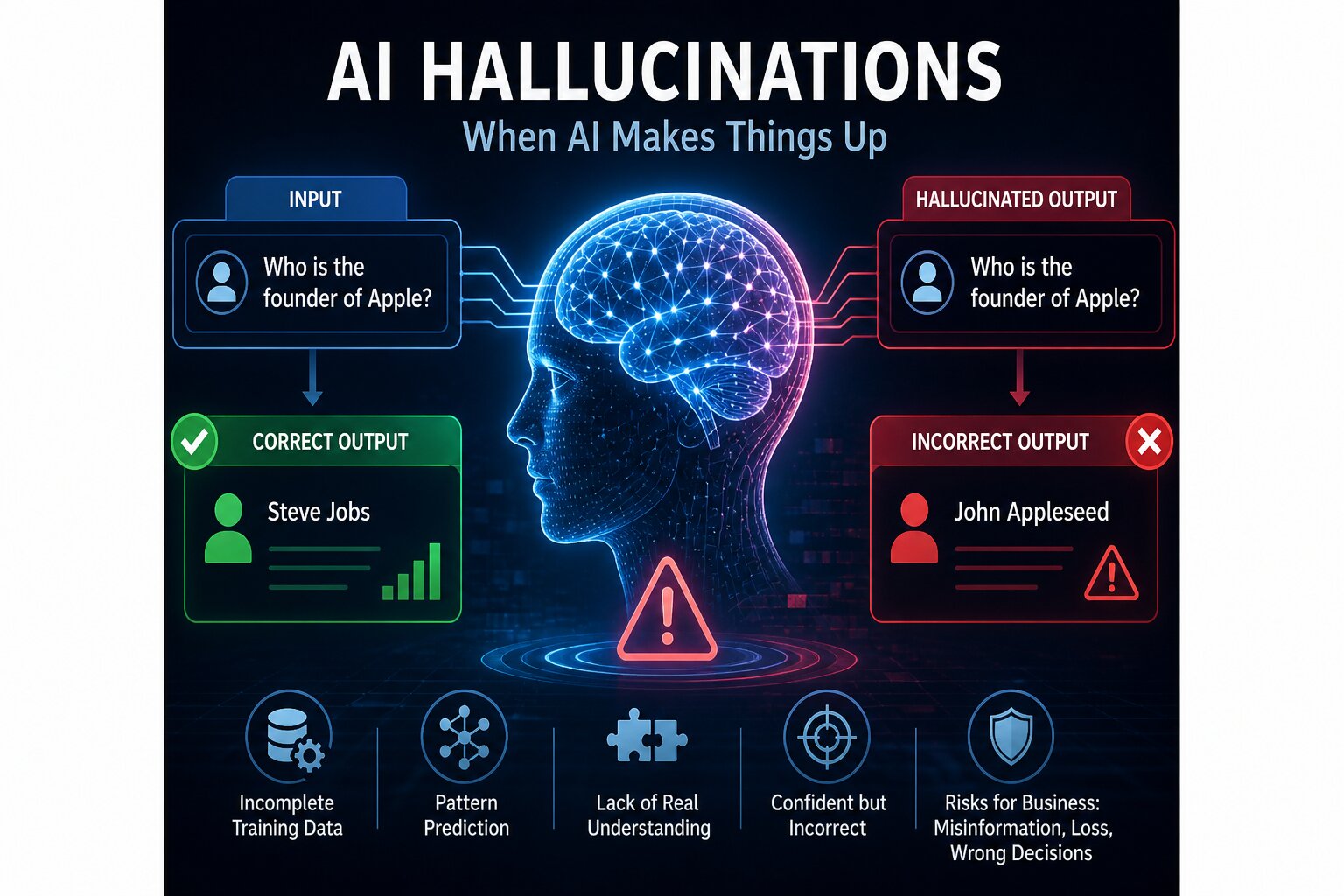

AI hallucinations occur when artificial intelligence models generate outputs that are incorrect or not grounded in real data. These responses can sound confident and accurate even though they are fabricated or misleading. This happens because AI models predict responses based on patterns in their training data rather than true understanding.

Why Do AI Hallucinations Happen?

AI hallucinations can occur due to several factors, including incomplete training data, biased datasets, lack of real-time knowledge, and over-optimization of language patterns, where a model favors fluent, plausible-sounding text over factual accuracy. Models do not truly understand context. They generate responses based on probability, so an answer that sounds likely can still be wrong.
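To make the role of probability concrete, here is a minimal sketch of sampling one next word from a weighted list. The prompt, the candidate words, and every probability are invented for illustration and do not come from any real model; the function names are hypothetical as well.

```python
import random

# Hypothetical next-word probabilities for the prompt
# "The capital of Australia is". All numbers are made up for illustration.
NEXT_TOKEN_PROBS = {
    "Canberra": 0.55,   # correct
    "Sydney": 0.35,     # plausible but wrong
    "Melbourne": 0.10,  # plausible but wrong
}

def sample_next_token(probs, rng):
    """Pick one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

def wrong_answer_rate(probs, correct, trials, seed=0):
    """Estimate how often sampling returns something other than `correct`."""
    rng = random.Random(seed)
    wrong = sum(sample_next_token(probs, rng) != correct for _ in range(trials))
    return wrong / trials

if __name__ == "__main__":
    rate = wrong_answer_rate(NEXT_TOKEN_PROBS, "Canberra", trials=10_000)
    print(f"Share of wrong answers: {rate:.1%}")
```

In this toy setup the correct word is the single most likely choice, yet a wrong word is still picked in a large share of runs, and each wrong word reads just as fluently as the right one.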

Real-World Examples of AI Hallucinations

AI hallucinations have been observed across industries. Chatbots may provide incorrect medical or legal advice, content generation tools may present false claims as fact, and automated systems may generate inaccurate insights. These examples highlight the importance of validation and oversight.

Risks of AI Hallucinations for Businesses

For businesses, AI hallucinations can lead to serious consequences. These include misinformation, poor decision-making, loss of customer trust, compliance violations, and reputational damage. Companies investing in digital transformation services must address these risks proactively.

Impact on AI Automation and Decision-Making

AI hallucinations can undermine automation systems by producing unreliable outputs. In critical workflows such as finance, healthcare, or customer support, incorrect AI responses can lead to costly errors. Businesses using AI automation must ensure proper safeguards.
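One possible safeguard for a finance workflow is a consistency check that runs before any automated action. The sketch below assumes a hypothetical AI step that extracts line items and a total from an invoice; the `ExtractedInvoice` type, the tolerance, and the routing labels are all illustrative assumptions, not part of any specific product.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import List

@dataclass
class ExtractedInvoice:
    """Fields a hypothetical AI extraction step returned for one invoice."""
    line_items: List[Decimal]
    total: Decimal

def is_consistent(invoice, tolerance=Decimal("0.01")):
    """True when the stated total matches the sum of the line items."""
    computed = sum(invoice.line_items, Decimal("0"))
    return abs(computed - invoice.total) <= tolerance

def route(invoice):
    """Auto-approve only consistent invoices; send the rest to a person."""
    return "auto_approve" if is_consistent(invoice) else "manual_review"

if __name__ == "__main__":
    invoice = ExtractedInvoice(
        line_items=[Decimal("10.00"), Decimal("5.50")],
        total=Decimal("155.00"),  # extraction error: the real total is 15.50
    )
    print(route(invoice))
```

A check like this only catches errors that break a known rule, so it complements human oversight rather than replacing it.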

How Businesses Can Prevent AI Hallucinations

Organizations can reduce hallucinations by implementing human-in-the-loop systems, using high-quality training data, applying validation layers, and monitoring AI outputs continuously. Following cloud infrastructure best practices also supports better system reliability and control.
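A validation layer and a human-in-the-loop step can be combined in a few lines. The sketch below is a deliberately crude stand-in: it scores an answer by how many of its words appear in a trusted source text and queues low-scoring answers for a reviewer. The function names, the 0.8 threshold, and the sample policy text are assumptions made for illustration.

```python
import re

def _words(text):
    """Lowercase word set, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def support_score(answer, sources):
    """Fraction of the answer's words found in the best-matching source."""
    answer_words = _words(answer)
    if not answer_words or not sources:
        return 0.0
    return max(len(answer_words & _words(s)) / len(answer_words) for s in sources)

def review_decision(answer, sources, threshold=0.8):
    """Release well-supported answers; queue the rest for a human reviewer."""
    score = support_score(answer, sources)
    decision = "release" if score >= threshold else "human_review"
    return decision, score

if __name__ == "__main__":
    policy = ["Refunds are accepted within 30 days of purchase."]
    answer = "Refunds are accepted within 90 days and include free shipping."
    print(review_decision(answer, policy))
```

Word overlap will miss small but important changes, such as a single swapped number in an otherwise matching sentence, so a real deployment would likely need stricter checks on top of this idea.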

Best Practices for Building Reliable AI Systems

To build trustworthy AI systems, businesses should focus on explainability, regular audits, feedback loops, and secure deployment. Partnering with experts in bespoke solutions helps create customized AI systems with reduced risks.
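A feedback loop can be as simple as tracking how often reviewers flag recent outputs and triggering an audit when that share climbs. The class below is a minimal sketch under that assumption; `HallucinationMonitor`, the window size, and the alert rate are hypothetical choices, not an established standard.

```python
from collections import deque

class HallucinationMonitor:
    """Track the share of recent outputs that reviewers flagged as wrong."""

    def __init__(self, window=100, alert_rate=0.05):
        # Only the most recent `window` reviews are kept.
        self.flags = deque(maxlen=window)
        self.alert_rate = alert_rate

    def record(self, flagged):
        """Store one review result; True means the output was flagged."""
        self.flags.append(bool(flagged))

    def rate(self):
        """Share of flagged outputs in the current window."""
        return sum(self.flags) / len(self.flags) if self.flags else 0.0

    def needs_audit(self):
        """True when the flagged share exceeds the alert rate."""
        return self.rate() > self.alert_rate

if __name__ == "__main__":
    monitor = HallucinationMonitor(window=10, alert_rate=0.2)
    for flagged in [False, False, True, False, True, True]:
        monitor.record(flagged)
    print(monitor.rate(), monitor.needs_audit())
```

Using a rolling window keeps the signal focused on recent behavior, which matters when a model, prompt, or data source changes over time.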

The Future of AI Reliability

As AI continues to evolve, improving accuracy and reducing hallucinations will become a top priority. Advances in model architecture, governance frameworks, and monitoring tools will play a key role in making AI systems more reliable and trustworthy.

Conclusion

AI hallucinations are one of the biggest challenges in modern AI systems. While AI offers incredible opportunities, it also requires careful implementation and oversight. By understanding the causes and risks of hallucinations, businesses can take proactive steps to build reliable, secure, and trustworthy AI solutions.